Hand Pose Recognition for Activities of daily living tasks using wearable stretchable sensor for Telerehabilitation

DOI:

https://doi.org/10.37934/sej.13.1.168189

Keywords:

Stretchable Sensor, Hand Pose Recognition, Machine Learning, ADL tasks, Telerehabilitation

Abstract

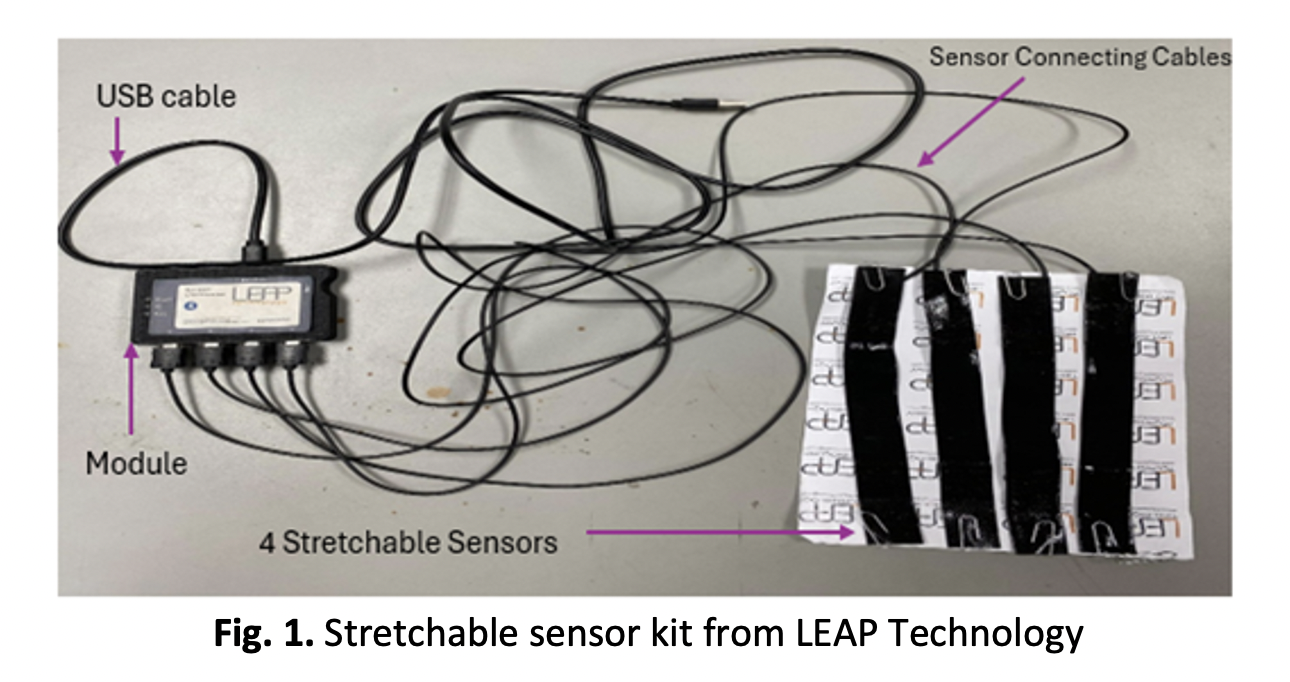

The recognition of hand poses during activities of daily living (ADLs) in post-stroke patients using wearable technologies offers greater precision and detail than conventional clinical assessments. This study deploys wearable stretchable sensors and evaluates the accuracy of detecting hand poses for six different ADLs using machine learning (ML) algorithms. The selected tasks are derived from the Motor Activity Log (MAL), a clinical assessment tool used by therapists to quantify post-stroke recovery. An initial study involving 20 healthy subjects is conducted to determine the best sensor configuration for capturing distinct hand poses. Four sensor placement configurations are considered: thumb, index finger, middle finger, and wrist (TIMW); thumb, index finger, middle finger, and back of the hand (TIMB); thumb, index finger, wrist, and back of the hand (TIWB); and all fingers (thumb, index, middle, and ring fingers). The t-Distributed Stochastic Neighbour Embedding (t-SNE) technique is used to visualize clustering patterns, with the TIMW configuration demonstrating the most distinct separation of ADL tasks. Data from the four sensors are acquired and processed offline. Support Vector Machine (SVM), K-Nearest Neighbour (KNN), and Random Forest (RF) classifiers are implemented to recognize the six ADLs, achieving accuracies of 97.22%, 95.83%, and 46.15%, respectively. Compared to previous research utilizing inertial measurement units (IMUs) and flex sensors, the proposed stretchable sensor system demonstrates promising performance. Future work will extend this study to stroke survivors to further validate the system’s effectiveness in rehabilitation settings.
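As a rough illustration of the classification step described above, the following sketch runs a plain k-nearest-neighbour classifier on synthetic four-channel data with six task labels. Everything specific in it is an assumption made for illustration and is not taken from the study: the synthetic data generator, the noise level, the choice of k = 3, the 80/20 split, and all function names. The accuracy it prints has no bearing on the reported results.

```python
import math
import random
from collections import Counter

N_CHANNELS = 4  # one reading per stretch sensor (assumed: e.g. thumb, index, middle, wrist)
N_TASKS = 6     # six ADL tasks, labelled 0..5 here (hypothetical labels)


def make_synthetic_samples(n_per_task, seed=0):
    """Placeholder data: each task gets a random mean sensor vector plus Gaussian noise."""
    rng = random.Random(seed)
    centers = [[rng.uniform(0.0, 1.0) for _ in range(N_CHANNELS)]
               for _ in range(N_TASKS)]
    samples = []
    for task, center in enumerate(centers):
        for _ in range(n_per_task):
            features = [c + rng.gauss(0.0, 0.02) for c in center]
            samples.append((features, task))
    rng.shuffle(samples)
    return samples


def knn_predict(train, query, k=3):
    """Return the majority label among the k training samples nearest to query."""
    nearest = sorted(train, key=lambda s: math.dist(s[0], query))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


def accuracy(train, test, k=3):
    """Fraction of test samples whose predicted label matches the true label."""
    correct = sum(knn_predict(train, x, k) == y for x, y in test)
    return correct / len(test)


samples = make_synthetic_samples(n_per_task=30)
split = int(0.8 * len(samples))  # simple hold-out split (assumed, not the study's protocol)
train, test = samples[:split], samples[split:]
print(f"KNN accuracy on synthetic data: {accuracy(train, test):.2%}")
```

With real recordings, the synthetic generator would be replaced by feature vectors extracted from the sensor signals, and a library implementation of SVM, KNN, and RF would be the more usual choice for comparing classifiers.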